Summary

Recent work has generalized neural rendering to active sensors like radar, but these approaches are fundamentally limited by the low resolution and the 2D nature of radar's range-azimuth measurements. We present CaRaFe, a novel method that, for the first time, unifies camera and radar measurements in a single joint volumetric representation. Our insight is that camera and radar offer complementary signals: radar provides robust, reliable metric depth but lacks fine angular resolution and any elevation resolution, while a camera has high angular resolution but no innate depth. Our approach can reconstruct unbounded, in-the-wild automotive scenes with challenging narrow baselines, capturing geometry at the level of detail of the camera while remaining anchored to the metric scale of radar. We optimize CaRaFe with a novel view synthesis objective, applied to both active and passive volume rendering, with additional depth supervision losses that leverage the unique advantages of each sensor. We validate CaRaFe on real outdoor driving scenes across multiple datasets, confirming the robustness and generality of the method.
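The complementarity between the two sensors can be checked with elementary projection geometry. The Python sketch below assumes an idealized pinhole camera and an idealized range-azimuth radar that share one frame (x forward, y left, z up); `camera_pixel`, `radar_bin`, and `from_spherical` are hypothetical helpers and the intrinsics are arbitrary, so this illustrates the measurement models only, not CaRaFe's sensor calibration.

```python
import numpy as np

# Assumed shared sensor frame for this sketch: x forward, y left, z up.

def camera_pixel(p, f=500.0, cx=320.0, cy=240.0):
    """Idealized pinhole camera looking along +x: depth (x) is divided out."""
    x, y, z = p
    return np.array([cx - f * y / x, cy - f * z / x])

def radar_bin(p):
    """Idealized range-azimuth radar: elevation (z angle) never appears."""
    x, y, _ = p
    return np.array([np.linalg.norm(p), np.arctan2(y, x)])

def from_spherical(r, az, el):
    """Point at range r, azimuth az, elevation el (radians)."""
    return np.array([r * np.cos(el) * np.cos(az),
                     r * np.cos(el) * np.sin(az),
                     r * np.sin(el)])

# Camera ambiguity: two points on one viewing ray, 10 m and 20 m away.
near = from_spherical(10.0, 0.1, 0.05)
far = 2.0 * near
print(np.allclose(camera_pixel(near), camera_pixel(far)))  # True: depth is lost
print(np.allclose(radar_bin(near), radar_bin(far)))        # False: radar separates them

# Radar ambiguity: same range and azimuth, elevation 0 vs 5 degrees.
low = from_spherical(15.0, 0.1, 0.0)
high = from_spherical(15.0, 0.1, np.deg2rad(5.0))
print(np.allclose(radar_bin(low), radar_bin(high)))        # True: elevation is lost
print(np.allclose(camera_pixel(low), camera_pixel(high)))  # False: camera separates them
```

Each sensor resolves exactly the coordinate the other one drops, which is why fusing them in one volume can recover both metric depth and elevation.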

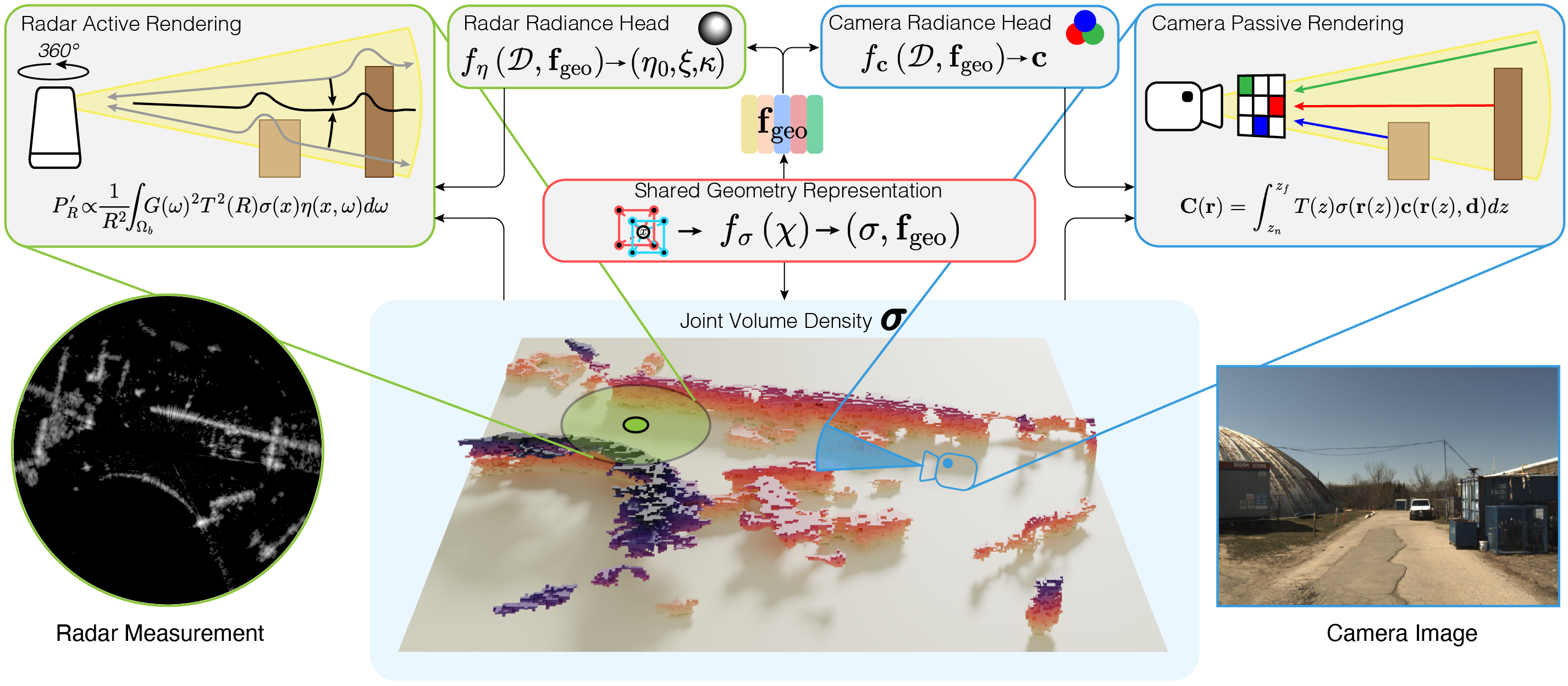

Learning a Unified, Multi-Modal Geometry Field

CaRaFe decomposes scenes into a shared multi-modal volume density and sensor-specific radiance heads. Optimization relies on a classic novel view synthesis objective, where alternating active and passive volume rendering passes allow complementary signals from camera and radar to integrate into the same geometry representation. By separately modeling the volumetric physics of radar sensing and camera sensing, we can simulate fundamentally different rendering processes and measurements from the same neural field.
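A minimal NumPy sketch of the shared-density idea follows, assuming hand-written toy functions in place of learned networks. `density`, `camera_head`, `radar_head`, `render_camera`, and `render_radar` are hypothetical names. The squared transmittance (out-and-back propagation) and the 1/t^4 spreading term from the radar equation are a common way to model active sensing and are assumptions here, not necessarily CaRaFe's exact radar model.

```python
import numpy as np

# Hypothetical toy stand-ins for the learned networks (not CaRaFe's architecture).
def density(x):
    """Shared volume density sigma(x) >= 0: a soft surface 12 m from the origin."""
    d = np.linalg.norm(x, axis=-1)
    return 5.0 * np.exp(-0.5 * ((d - 12.0) / 0.3) ** 2)

def camera_head(x):
    """Camera-specific radiance head: constant RGB color."""
    return np.tile([0.6, 0.5, 0.4], (x.shape[0], 1))

def radar_head(x):
    """Radar-specific head: constant reflectance."""
    return np.full(x.shape[0], 0.8)

def sample_ray(origin, direction, near=1.0, far=30.0, n=256):
    """Evenly spaced samples t_i along the ray, their spacings, and 3D positions."""
    t = np.linspace(near, far, n)
    delta = np.full(n, t[1] - t[0])
    pts = origin + t[:, None] * direction
    return t, delta, pts

def opacity_and_transmittance(sigma, delta):
    """Per-sample opacity alpha_i and transmittance T_i = prod_{j<i} (1 - alpha_j)."""
    alpha = 1.0 - np.exp(-sigma * delta)
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alpha[:-1]]))
    return alpha, trans

def render_camera(origin, direction):
    """Passive rendering: light crosses the medium once, weights T_i * alpha_i."""
    t, delta, pts = sample_ray(origin, direction)
    alpha, trans = opacity_and_transmittance(density(pts), delta)
    w = trans * alpha
    rgb = (w[:, None] * camera_head(pts)).sum(axis=0)
    depth = (w * t).sum() / max(w.sum(), 1e-10)  # expected depth along the ray
    return rgb, depth

def render_radar(origin, direction):
    """Active rendering (assumed model): the signal travels out and back, so
    transmittance enters squared, plus 1/t^4 spreading loss. Returns a
    return-power profile over range rather than a single value."""
    t, delta, pts = sample_ray(origin, direction)
    alpha, trans = opacity_and_transmittance(density(pts), delta)
    power = trans**2 * alpha * radar_head(pts) / t**4
    return t, power

origin, direction = np.zeros(3), np.array([1.0, 0.0, 0.0])
rgb, depth = render_camera(origin, direction)
t, power = render_radar(origin, direction)
print("camera rgb:", rgb.round(3), "expected depth:", round(depth, 2))
print("radar peak range:", round(t[np.argmax(power)], 2))
```

Both renderers query the same `density`, so a photometric loss on `render_camera` and a range-profile loss on `render_radar` would both shape one geometry; alternating which loss is applied at each step is one simple way to realize the alternating passes. A fuller radar model would also integrate the profile over the beam's elevation extent, which is precisely where radar loses elevation.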

CaRaFe Beats All Baselines

CaRaFe outperforms all tested camera and radar baselines in geometric fidelity, while matching or exceeding them in novel view synthesis quality for both sensors. CaRaFe captures the dense, high-resolution geometric signal of camera images while leveraging the robust metric scale of radar data. Note that, unlike a radar-only baseline, which struggles to resolve target elevation, CaRaFe disambiguates elevation using camera-derived object cues.

Driving trajectory comparisons of 3D reconstructions against ground-truth RGB.

Individual baseline reconstructions. Note the voids and incorrect elevation assignment in the radar baselines, as compared to the floaters and distorted scene scale in the camera baselines.

BibTeX

@inproceedings{carafe,
  title     = {{CaRaFe}: Camera-Radar Radiance Fields for Scene Reconstruction},
  author    = {Borts, David and Ost, Julian and Basu, Shamik and Broedermann, Tim and Ramazzina, Andrea and Sakaridis, Christos and Bijelic, Mario and Heide, Felix},
  booktitle = {ACM SIGGRAPH 2026 Conference Papers},
  year      = {2026},
}

Related Work

Radar Fields: Frequency-Space Neural Scene Representations for FMCW Radar

David Borts, Erich Liang, Tim Brödermann, Andrea Ramazzina, Stefanie Walz, Edoardo Palladin, Jipeng Sun, David Bruggemann, Christos Sakaridis, Luc Van Gool, Mario Bijelic, Felix Heide

SIGGRAPH 2024